Introduction

Radar systems have evolved tremendously since World War II, when they were used mainly for aircraft and ship surveillance, navigation and missile guidance. The wavelength of the electromagnetic (EM) waves transmitted by those radar systems was on the order of several metres, which suited large targets such as aircraft, ships and submarines. The receiving antennas were steered mechanically, and the radars were manned by human operators who estimated range and direction, as digital computers were not yet powerful enough. Today radars are used in many applications, including civil aviation, navigation, mapping, meteorology, radio astronomy and medicine. More recently, radar has emerged as one of the key technologies in autonomous driving systems, providing environmental perception in all weather conditions [3], [4]. Radars are used in advanced driver assistance systems (ADAS) such as automatic emergency braking (AEB) and adaptive cruise control (ACC). Significant progress in radio-frequency (RF) CMOS technology, which enables a high level of radar-on-chip integration, has reduced the cost of automotive radar to the level of consumer mass production.

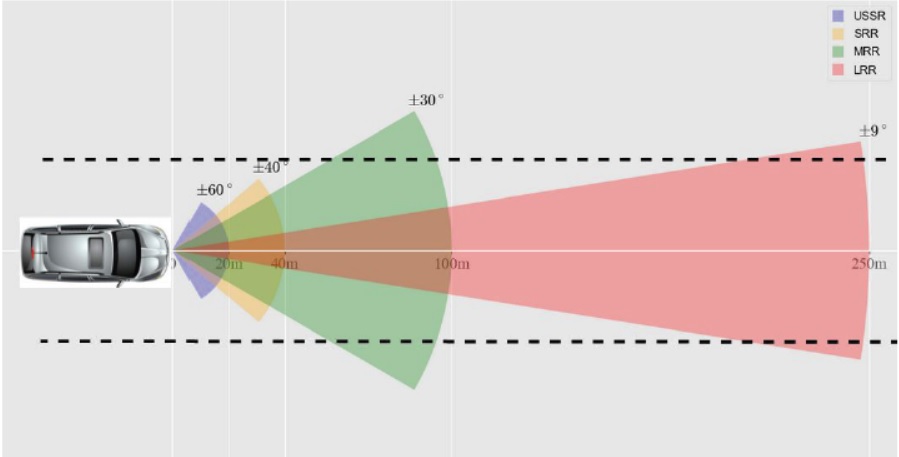

Automotive radars have historically been classified as short range radars (SRR), mid range radars (MRR) and long range radars (LRR) based on their performance and end application. SRR sensors, with a detection range of 45 m and an azimuth field of view (FoV) of [−40°, 40°], are deployed for blind spot detection; MRR sensors, with a detection range of 100 m and an azimuth FoV of [−30°, 30°], are deployed for AEB and lane change assistance; and LRR sensors, with a detection range of 250 m and an azimuth FoV of [−9°, 9°], are deployed for ACC. A more recently introduced class, ultra short range radars (USRRs), has an azimuth FoV of [−60°, 60°] and a range of up to 20 m, and is used for autonomous parking and side-looking applications.
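As a rough illustration of what these nominal figures mean geometrically, the sketch below computes the lateral width spanned by each class's azimuth sector at its maximum range, 2R·sin(half-FoV). It assumes an idealised flat 2D sector and uses only the range and FoV values quoted above; the dictionary and function names are illustrative.

```python
import math

# Nominal (max range in m, azimuth half-FoV in degrees) taken from the text above.
RADAR_CLASSES = {
    "USRR": (20.0, 60.0),
    "SRR": (45.0, 40.0),
    "MRR": (100.0, 30.0),
    "LRR": (250.0, 9.0),
}


def lateral_coverage_m(max_range_m: float, half_fov_deg: float) -> float:
    """Lateral span between the two sector edges at the maximum slant range."""
    return 2.0 * max_range_m * math.sin(math.radians(half_fov_deg))


if __name__ == "__main__":
    for name, (rng, half_fov) in RADAR_CLASSES.items():
        print(f"{name}: {lateral_coverage_m(rng, half_fov):.1f} m wide at {rng:.0f} m")
```

Under this simplification the LRR sector is only about 78 m wide at 250 m, while the SRR sector is already about 58 m wide at 45 m, which reflects the trade between reach and angular coverage.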

All radar sensors in a vehicle are connected to an electronic control unit for tracking and sensor fusion. Until recently, SRR and MRR sensors operated in the 24 GHz frequency band; a new band at 79 GHz has been allocated for the long-term development of these radars. Radars are also being used inside the vehicle for a host of applications such as child occupancy detection, drowsy and fatigued driver detection and warning, and gesture-based control.

Fig. 1. Different types of radar sensors in use in automotive applications

Radars and cameras play an important role in autonomous vehicles. Automotive radars have several advantages over cameras and lidars: long operating range, immunity to lighting and weather conditions, the ability to operate behind a non-transparent fascia, and direct measurement of target velocity at the sensor level. The last point is very important for autonomous driving, where the perception system needs to understand not only where the vehicles are but also how fast they are moving. Camera-based systems need a series of measurements to estimate velocity, and they spend considerable computational resources on algorithms such as feature extraction, optical flow and, optionally, SLAM. Also, since cameras project the 3D world onto a 2D plane, the quality of geometric measurements from cameras deteriorates rapidly with distance: small errors in pixel measurements for a distant object result in large depth estimation errors. In this respect a radar is a great complement to a camera-based perception system.
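The distance dependence can be made concrete with a stereo camera, used here only as an assumed example: depth is Z = f·b/d for focal length f (pixels), baseline b and disparity d, so a fixed disparity error δd produces a depth error of roughly Z²·δd/(f·b). The focal length, baseline and 0.25-pixel error below are hypothetical values.

```python
def stereo_depth_error_m(depth_m: float, focal_px: float = 1000.0,
                         baseline_m: float = 0.3, disparity_err_px: float = 0.25) -> float:
    """First-order depth error of a stereo pair: dZ ~= Z^2 / (f * b) * dd.

    Derived from Z = f * b / d; all default parameters are hypothetical.
    """
    return depth_m ** 2 / (focal_px * baseline_m) * disparity_err_px


if __name__ == "__main__":
    for z in (10.0, 50.0, 100.0):
        print(f"{z:5.0f} m -> +/- {stereo_depth_error_m(z):.2f} m")
```

With these assumptions the error grows from about 8 cm at 10 m to about 8 m at 100 m: a tenfold increase in distance gives a hundredfold increase in depth error.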

Many radars in the industry today offer the advantages above, but a high-resolution imaging radar offers additional advantages such as:

- Detection of small targets (e.g. a child or a cyclist).

- Improved detection in the elevation dimension (useful for bridges, tunnels, etc.).

- Simultaneous long-range detection and a detailed point cloud at short range.

- Much better ability to distinguish between target types.

- Lower false alarm rate.

- Ego-motion estimation.
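Ego-motion estimation is possible because all stationary objects share the same apparent motion relative to the sensor. A minimal sketch, assuming a 2D radar at the origin looking along x, positive radial velocity meaning a receding target, and detections that are all stationary: each static point at azimuth θ has radial velocity v_r = −(v_x cos θ + v_y sin θ), so the sensor velocity (v_x, v_y) follows from a linear least-squares fit. The function name is hypothetical, and a practical system would also need to reject moving targets, for example with RANSAC.

```python
import numpy as np


def estimate_ego_velocity(azimuth_rad, radial_velocity_mps):
    """Least-squares sensor velocity (vx, vy) from the Doppler of stationary detections.

    Assumes every detection is stationary and uses v_r = -(vx*cos(az) + vy*sin(az)).
    """
    az = np.asarray(azimuth_rad, dtype=float)
    vr = np.asarray(radial_velocity_mps, dtype=float)
    design = np.column_stack([np.cos(az), np.sin(az)])
    velocity, *_ = np.linalg.lstsq(design, -vr, rcond=None)
    return velocity


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    true_v = np.array([15.0, 0.5])  # sensor moving mostly forward at 15 m/s
    az = rng.uniform(-np.pi / 3, np.pi / 3, size=50)
    vr = -(np.cos(az) * true_v[0] + np.sin(az) * true_v[1]) + rng.normal(0.0, 0.05, 50)
    print(estimate_ego_velocity(az, vr))  # close to the true velocity
```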

High-resolution imaging radars, also called 4D imaging radars, capture the environment in three spatial dimensions plus a fourth dimension that captures motion over time, i.e. the speed of oncoming and receding vehicles. 4D imaging radars address the main limitation of conventional radars, whose resolution is low compared with that of cameras and lidars. They resolve objects in both azimuth and elevation and can classify them as buses, cars, motorbikes or pedestrians, along with the direction in which they are moving.
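A 4D detection is typically reported in sensor-native coordinates (range, azimuth, elevation) together with the Doppler-derived radial velocity. The sketch below converts one such detection into a Cartesian point with its velocity attached; the axis convention (x forward, y left, z up, azimuth positive to the left), the sign convention (negative velocity means approaching) and the names are assumptions for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class RadarPoint4D:
    x: float  # forward (m)
    y: float  # left (m)
    z: float  # up (m)
    radial_velocity_mps: float  # from the Doppler shift; negative = approaching (assumed)


def to_cartesian(range_m: float, azimuth_deg: float, elevation_deg: float,
                 radial_velocity_mps: float) -> RadarPoint4D:
    """Convert a (range, azimuth, elevation, Doppler velocity) detection to x/y/z plus velocity."""
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    horizontal = range_m * math.cos(el)  # projection onto the ground plane
    return RadarPoint4D(
        x=horizontal * math.cos(az),
        y=horizontal * math.sin(az),
        z=range_m * math.sin(el),
        radial_velocity_mps=radial_velocity_mps,
    )


if __name__ == "__main__":
    # A hypothetical car 40 m ahead, 5 deg to the left, approaching at 8 m/s
    print(to_cartesian(40.0, 5.0, 0.5, -8.0))
```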

Operational challenges with automotive radars

Variety of scenarios

Automotive radars are required to operate in a variety of scenarios, from metropolitan and urban to rural and freeway environments. These scenarios contain a wide spectrum of targets with different radar cross sections (RCS), velocities and motion patterns: pedestrians, animals, buildings, foliage, bridges, road debris, etc. Automotive radars must detect, localize, track and classify everything from slow-moving pedestrians and animals in a parking lot to fast-moving vehicles on a freeway. This wide range of velocities challenges the waveform design, chirp configuration and frame size, and the trade-offs between Doppler ambiguity, maximum range and range resolution complicate the system design.
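These trade-offs can be made concrete with the standard first-order relations for an FMCW chirp sequence: range resolution c/(2B), maximum range c·f_IF,max/(2S) for chirp slope S and usable IF bandwidth f_IF,max, maximum unambiguous velocity λ/(4T_c) for chirp repetition time T_c, and velocity resolution λ/(2N·T_c) for N chirps per frame. The parameter values below are hypothetical and are not any product's configuration.

```python
from dataclasses import dataclass

C = 299_792_458.0  # speed of light in m/s


@dataclass
class ChirpConfig:
    carrier_hz: float       # carrier frequency
    bandwidth_hz: float     # swept RF bandwidth B
    ramp_time_s: float      # duration of the frequency ramp
    chirp_period_s: float   # chirp repetition interval T_c
    chirps_per_frame: int   # N
    max_if_hz: float        # usable IF (beat-frequency) bandwidth


def fmcw_metrics(cfg: ChirpConfig) -> dict:
    """First-order range and velocity limits of an FMCW chirp sequence."""
    wavelength = C / cfg.carrier_hz
    slope = cfg.bandwidth_hz / cfg.ramp_time_s  # S in Hz/s
    return {
        "range_resolution_m": C / (2 * cfg.bandwidth_hz),
        "max_range_m": C * cfg.max_if_hz / (2 * slope),
        "max_velocity_mps": wavelength / (4 * cfg.chirp_period_s),
        "velocity_resolution_mps": wavelength / (2 * cfg.chirps_per_frame * cfg.chirp_period_s),
    }


if __name__ == "__main__":
    cfg = ChirpConfig(79e9, 1e9, 40e-6, 50e-6, 128, 20e6)
    for name, value in fmcw_metrics(cfg).items():
        print(f"{name}: {value:.3f}")
```

With these numbers the unambiguous velocity is only about 19 m/s (roughly 68 km/h), too low for freeway traffic. Shortening T_c raises it, but the shorter ramp then forces either a smaller bandwidth (coarser range resolution) or a steeper slope (shorter maximum range for the same IF bandwidth), which is exactly the tension described above.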

The radar sensor needs to deal with targets ranging from low-RCS road debris to a high-RCS metallic bridge, which requires a high dynamic range (DR). High DR is also required to detect a small, distant object and a large, nearby object simultaneously. The DR dictates the effective number of bits (ENOB) of the analog-to-digital converters (ADCs), and ADC cost increases with DR. A typical urban scenario contains many different targets spread across the FoV, which adds computational complexity to detecting and tracking them.
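Because echo power falls as σ/R⁴ (radar equation), the spread between the weakest and strongest echoes grows quickly. The back-of-envelope sketch below compares two hypothetical targets, a 0.01 m² piece of debris at 100 m and a 100 m² metallic object at 5 m, and converts the result into ideal ADC bits with the 6.02N + 1.76 dB quantisation rule.

```python
import math


def relative_echo_power_db(rcs_m2: float, range_m: float) -> float:
    """Echo power relative to a common reference: the radar equation gives P_r ∝ σ / R^4."""
    return 10.0 * math.log10(rcs_m2) - 40.0 * math.log10(range_m)


def dynamic_range_db(weak, strong) -> float:
    """DR needed to see both targets; each target is a (rcs_m2, range_m) tuple."""
    return relative_echo_power_db(*strong) - relative_echo_power_db(*weak)


def ideal_adc_bits(dr_db: float) -> float:
    """Bits of an ideal ADC whose quantisation SNR (6.02 N + 1.76 dB) equals dr_db."""
    return (dr_db - 1.76) / 6.02


if __name__ == "__main__":
    debris_far = (0.01, 100.0)  # hypothetical: small debris, -20 dBsm at 100 m
    large_near = (100.0, 5.0)   # hypothetical: large metallic object, +20 dBsm at 5 m
    dr = dynamic_range_db(debris_far, large_near)
    print(f"DR ~ {dr:.1f} dB, ideal ADC ~ {ideal_adc_bits(dr):.1f} bits")  # 92.0 dB, 15.0 bits
```

The estimate ignores antenna patterns and the processing gain of the range and Doppler FFTs, which relaxes the ADC requirement in practice, so it only illustrates why DR drives ADC resolution.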

Automotive radars are required to provide high-resolution 4D information about the surroundings: the 3D position of each target, plus a fourth dimension, captured over time, that gives the target's speed. This information is used to identify obstacles, so the angular resolution of the radar should be on the order of 0.1 degrees, comparable to that of some high-resolution lidars on the market. Automotive radars also need to support a wide variety of safety functions under varied operating conditions, such as cold and hot weather, which complicates the optimization of radar system parameters.
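Angular resolution translates into a lateral (cross-range) resolution that degrades linearly with distance: for small angles, two targets at range R are separable laterally only if they are more than roughly R·Δθ apart, with Δθ in radians. The helper below is an illustrative small-angle approximation.

```python
import math


def cross_range_resolution_m(range_m: float, angular_res_deg: float) -> float:
    """Small-angle approximation of the lateral separation resolvable at a given range."""
    return range_m * math.radians(angular_res_deg)


if __name__ == "__main__":
    for res_deg in (1.0, 0.1):
        print(f"{res_deg} deg at 100 m -> {cross_range_resolution_m(100.0, res_deg):.2f} m")
```

At 100 m, a 1° radar separates objects only if they are about 1.75 m apart, roughly the width of a car, whereas 0.1° brings this down to about 17 cm.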

In dense urban environments the radar experiences multipath effects, which lead to estimation errors and generate ghost objects. Mitigating multipath requires additional processing and therefore adds computational complexity.
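A simple geometric model shows where such ghosts can appear. Assuming a flat, specular reflector such as a guardrail running parallel to the vehicle along y = y_wall, the path radar → wall → target → wall → radar looks to the radar like a direct echo from the target's mirror image across the wall. The sketch computes that mirror position and applies one common plausibility heuristic, flagging detections that lie behind the reflector; the names and the straight-wall geometry are assumptions.

```python
from typing import Tuple

Point = Tuple[float, float]  # (x forward, y left) in metres, radar at the origin


def mirror_ghost(target: Point, wall_y: float) -> Point:
    """Apparent position of the double-bounce ghost: the target mirrored across y = wall_y."""
    x, y = target
    return (x, 2.0 * wall_y - y)


def is_behind_reflector(detection: Point, wall_y: float) -> bool:
    """True if the detection lies on the far side of the wall from the radar (at y = 0)."""
    _, y = detection
    return (y - wall_y) * (0.0 - wall_y) < 0.0


if __name__ == "__main__":
    ghost = mirror_ghost((20.0, 1.0), wall_y=3.0)
    print(ghost, is_behind_reflector(ghost, 3.0))
```

Other bounce orders (for example radar → target → wall → radar) produce ghosts at different positions, so practical multipath mitigation involves more than this single check.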

Shape of the objects

Automotive radars need to present an accurate image of the surroundings to the perception unit of the host vehicle so that it can take the correct decisions. It is not sufficient to detect, localize and track the targets; the radar also needs to provide the shape of the targets and possibly classify them as pedestrians, motorbikes, trucks, etc. These tasks require high resolution in the range, Doppler and angle dimensions to achieve lidar-like performance. The high-resolution requirement increases the computational complexity of radar signal processing, so there is a need for efficient, low-complexity algorithms that can run in real time. Improved azimuth and elevation resolution is achieved with a large antenna array aperture, by increasing the number of transmit and receive antennas. This demands more computation to process data from so many receivers. A large antenna aperture also increases the form factor, makes vehicle integration more difficult and raises the system cost as the number of antennas grows.
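The link between angular resolution and aperture size can be estimated with a common rule of thumb for a uniform linear array with half-wavelength spacing: Δθ ≈ 2/N radians at boresight for N (virtual) elements. The sketch below inverts this to find N and the approximate aperture at 79 GHz; it is an order-of-magnitude estimate, not a design formula.

```python
import math

C = 299_792_458.0  # speed of light in m/s


def elements_for_resolution(angular_res_deg: float) -> int:
    """Elements needed for a given boresight resolution, using the rule of thumb Δθ ≈ 2/N rad."""
    return math.ceil(2.0 / math.radians(angular_res_deg))


def aperture_m(num_elements: int, carrier_hz: float = 79e9) -> float:
    """Approximate aperture of a uniform linear array with half-wavelength spacing."""
    wavelength = C / carrier_hz
    return num_elements * wavelength / 2.0


if __name__ == "__main__":
    for res in (1.0, 0.1):
        n = elements_for_resolution(res)
        print(f"{res} deg: {n} elements, aperture ~ {aperture_m(n):.2f} m")
```

By this estimate, reaching 0.1° with conventional beamforming alone would need over a thousand elements in one dimension and an aperture of about 2 m, which is why large MIMO virtual arrays are combined with high-resolution angle estimation algorithms rather than relying on aperture alone.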

Interference

Automotive radars suffer from self-interference, cross-interference from other radars on the same vehicle, and interference from radars on other vehicles. Self-interference originates from strong echoes from the vehicle platform, the radome and the nearby ground. These echoes mask short ranges and limit the detection probability for close targets. Self-interference also limits the DR, and if these strong reflections saturate the receiver, the probability of ghost targets increases. Different radars mounted on the same vehicle have overlapping FoVs, so one radar can interfere with another; careful system design and frequency planning are needed to avoid this form of interference. Interference from radars on other vehicles depends on the distance between the radars, their beam patterns and orientations, and the signal processing scheme. Algorithms to mitigate interference increase the computational complexity.
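When an interfering FMCW radar sweeps through the victim's receiver band, the interference typically shows up as a short, high-amplitude burst in the sampled beat signal. One widely used mitigation, time-domain excision, detects samples whose magnitude is far above the typical level and zeroes them before the range FFT. The sketch below assumes complex baseband samples for a single chirp and a hypothetical threshold of four times the median magnitude; it is one simple technique among several, not necessarily the one used in any particular product.

```python
import numpy as np


def excise_interference(samples, threshold_factor: float = 4.0):
    """Zero beat-signal samples whose magnitude exceeds threshold_factor times the median.

    Returns the cleaned samples and a boolean mask of the excised positions.
    """
    samples = np.asarray(samples, dtype=complex)
    magnitude = np.abs(samples)
    threshold = threshold_factor * np.median(magnitude)
    mask = magnitude > threshold
    cleaned = samples.copy()
    cleaned[mask] = 0.0
    return cleaned, mask


if __name__ == "__main__":
    n = np.arange(256)
    beat = np.exp(2j * np.pi * 0.05 * n)  # single target tone
    beat[100:110] += 20.0                 # simulated interference burst
    cleaned, mask = excise_interference(beat)
    print(np.flatnonzero(mask))
```

Zeroing removes the interference energy but leaves a gap that raises the sidelobes after the FFT; smoother tapering around the gap or reconstruction of the missing samples are common refinements.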

Antenna imperfections

To achieve high angular resolution, the antenna aperture needs to be increased. This is done by increasing the number of transmit and receive antennas to form a virtual antenna array and using multiple-input multiple-output (MIMO) signal processing techniques. Hundreds of virtual array elements can be synthesized by cascading multiple radar transceivers, each supporting a small number of antennas. All the transceivers need to be synchronized so that they behave as a single unit and data from all receive antennas can be processed coherently. Clock distribution across multiple transceivers is challenging, and data transmission and ADC sampling also need to be synchronized. With so many antenna elements, and with the high-frequency local oscillator signal routed from the master transceiver to the slaves, there will be mismatches that must be calibrated out before the data is processed across the antenna elements. The calibration process is quite challenging. It is typically done in an anechoic chamber with a known point target, capturing the antenna array response from all directions. The calibration data needs to be recorded for each radar configuration, such as USRR, SRR, MRR or LRR. Finding the angle of a target to a resolution of better than 1 degree from a single snapshot requires computationally complex algorithms such as orthogonal matching pursuit (OMP) and the iterative adaptive approach (IAA).
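Two of the ideas above can be sketched compactly. In a MIMO radar each transmit/receive pair acts, in the far field, like a single element at the sum of the transmit and receive antenna positions, so a few physical antennas yield many virtual ones. Channel mismatches can then be estimated from the response to a known point target. The sketch assumes a 1D array with positions in units of half a wavelength, a single calibration target at boresight, and mismatches that are purely per-channel complex gains; chamber calibration over many angles, as described above, also captures angle-dependent effects such as mutual coupling, which this sketch ignores. Function names are illustrative.

```python
import numpy as np


def virtual_array_positions(tx_positions, rx_positions):
    """Virtual element positions p_tx + p_rx for every Tx/Rx pair (Tx-major order)."""
    tx = np.asarray(tx_positions, dtype=float)
    rx = np.asarray(rx_positions, dtype=float)
    return (tx[:, None] + rx[None, :]).ravel()


def steering_vector(positions_half_wl, angle_rad):
    """Ideal array response for element positions given in units of lambda/2."""
    return np.exp(1j * np.pi * np.asarray(positions_half_wl) * np.sin(angle_rad))


def calibration_coefficients(boresight_response):
    """Per-channel corrections that equalise gain and phase to channel 0.

    At boresight the ideal response is identical on every channel, so any deviation
    is attributed to channel mismatch.
    """
    response = np.asarray(boresight_response, dtype=complex)
    return response[0] / response


if __name__ == "__main__":
    # 3 Tx spaced 4 half-wavelengths apart and 4 Rx spaced 1 apart -> 12 uniform virtual elements
    pos = virtual_array_positions([0, 4, 8], [0, 1, 2, 3])
    rng = np.random.default_rng(0)
    mismatch = rng.uniform(0.8, 1.2, pos.size) * np.exp(1j * rng.uniform(-0.5, 0.5, pos.size))
    coeffs = calibration_coefficients(mismatch * steering_vector(pos, 0.0))
    corrected = coeffs * mismatch * steering_vector(pos, np.radians(20.0))
    # After calibration the data matches the ideal steering vector up to one common factor
    ratio = corrected / steering_vector(pos, np.radians(20.0))
    print(np.allclose(ratio, ratio[0]))  # True
```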

System test

Before the radars are tested in vehicles, they are first tested in a virtual world that recreates real-world scenarios. This is done in a simulation environment to test the performance of the various functional blocks. After that, the radar needs to be tested in real-world scenarios. For this, the radar data is recorded in real time along with data from another sensor (typically a camera), which serves as ground truth. Capturing radar data in real-world scenarios is a challenging task in itself. 4D imaging radars naturally have more antenna channels, which increases the data collected per frame. This data has to be transported to the processor and stored on disk as quickly as possible so that the dynamic real-world scene is sampled at a high rate.
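Because the camera serves as ground truth, each radar frame has to be paired with the camera frame captured closest in time. A minimal sketch, assuming both streams carry timestamps in seconds from a common clock, sorted camera timestamps, and a hypothetical 20 ms tolerance beyond which a radar frame is left unpaired:

```python
from bisect import bisect_left
from typing import List, Sequence, Tuple


def match_nearest(radar_ts: Sequence[float], camera_ts: Sequence[float],
                  max_gap_s: float = 0.02) -> List[Tuple[int, int]]:
    """Pair each radar frame index with the index of the closest camera frame.

    camera_ts must be sorted; radar frames with no camera frame within max_gap_s are skipped.
    """
    pairs = []
    for i, t in enumerate(radar_ts):
        j = bisect_left(camera_ts, t)  # first camera frame at or after t
        candidates = [k for k in (j - 1, j) if 0 <= k < len(camera_ts)]
        if not candidates:
            continue
        best = min(candidates, key=lambda k: abs(camera_ts[k] - t))
        if abs(camera_ts[best] - t) <= max_gap_s:
            pairs.append((i, best))
    return pairs


if __name__ == "__main__":
    radar = [0.00, 0.05, 0.10, 0.50]
    camera = [0.001, 0.034, 0.067, 0.100]
    print(match_nearest(radar, camera))
```

Binary search keeps each lookup logarithmic in the number of camera frames, which matters when long recordings are aligned offline.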

To reduce the data size, innovative solutions such as data compression are applied before the data is sent to the host processor. As an example, our imaging radar unit, the SRIR256V1, generates 320 MB of data per second. This data is compressed and transferred to the processor over a 1 Gbps Ethernet link. It is recorded, along with a synchronized camera feed, on a hard disk drive on the host processor for analysis, algorithm testing and fine-tuning.
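The figures above imply a minimum compression ratio. Assuming decimal units (1 MB = 10^6 bytes) and, as a hypothetical allowance for protocol overhead, that about 90% of the 1 Gbps link is usable, 320 MB/s corresponds to 2560 Mbit/s, so the data must shrink by roughly 2.8x. A small helper makes the arithmetic explicit:

```python
def min_compression_ratio(raw_mb_per_s: float, link_gbps: float = 1.0,
                          link_efficiency: float = 0.9) -> float:
    """Smallest compression ratio that fits a raw data rate into a network link.

    Assumes 1 MB = 1e6 bytes and that only link_efficiency of the line rate is usable.
    """
    raw_mbit_per_s = raw_mb_per_s * 8.0
    usable_mbit_per_s = link_gbps * 1000.0 * link_efficiency
    return raw_mbit_per_s / usable_mbit_per_s


if __name__ == "__main__":
    print(f"{min_compression_ratio(320.0):.2f}")  # 2.84
```

Even with a perfectly efficient link the ratio cannot fall below 2.56, so some form of compression is unavoidable at this data rate.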

Safety systems

Imaging radar sensors are used in blind spot detection, cross traffic alert and emergency braking systems. Any sensor imperfection or measurement error has an adverse impact on safety and user satisfaction, and this has to be taken into account when designing safety systems around these sensors. To mitigate the risks arising from imperfect sensors, the sensors must be tested periodically to verify that they remain healthy. The software that controls the safety system needs to ensure that the sensor is healthy and that its measurements are safe to use in dynamic driving scenarios. These tests are automatic and built into the sensors: once the user triggers the self-tests, everything runs automatically, and the user can decide whether the sensor measurements can safely be used by the safety function.
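The decision the safety software makes from the self-test results can be sketched as a simple fail-safe aggregation. Everything here is hypothetical: the test names, the three-level status, and the policy that a missing or failed test blocks use while a degraded result is accepted only when the safety function explicitly allows it.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict


class Health(Enum):
    OK = "ok"
    DEGRADED = "degraded"
    FAILED = "failed"


@dataclass
class SelfTestReport:
    # Hypothetical test name -> result, e.g. {"tx_power": Health.OK, "lo_lock": Health.OK}
    results: Dict[str, Health] = field(default_factory=dict)

    def overall(self) -> Health:
        states = set(self.results.values())
        # Fail safe: a report with no results is treated like a failure
        if not states or Health.FAILED in states:
            return Health.FAILED
        if Health.DEGRADED in states:
            return Health.DEGRADED
        return Health.OK


def measurements_usable(report: SelfTestReport, allow_degraded: bool = False) -> bool:
    """Decide whether the safety function may consume this sensor's measurements."""
    status = report.overall()
    return status is Health.OK or (allow_degraded and status is Health.DEGRADED)


if __name__ == "__main__":
    report = SelfTestReport({"tx_power": Health.OK, "rx_gain": Health.DEGRADED})
    print(report.overall(), measurements_usable(report))
```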

High resolution radar signal processing

Once the large volume of data arrives at the host processor, it needs to be processed: valid points must be detected, and the detected points must be tracked and classified. The entire process must be completed as quickly as possible so that information about the vehicle's surroundings is available in time to react in dynamic driving scenarios.
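Detection of valid points is commonly done with a constant false alarm rate (CFAR) detector, which compares each cell against a threshold scaled from the noise level estimated in neighbouring cells. A minimal 1D cell-averaging CFAR (CA-CFAR) over a power profile is sketched below, assuming exponentially distributed noise power (square-law detection); the window sizes and false-alarm probability are hypothetical defaults, and the text does not state which detector the authors use.

```python
import numpy as np


def ca_cfar(power, num_train: int = 8, num_guard: int = 2, pfa: float = 1e-4):
    """1D cell-averaging CFAR. Returns a boolean detection mask.

    Cells too close to either edge for a full training window are never declared.
    """
    power = np.asarray(power, dtype=float)
    n_cells = 2 * num_train
    # Threshold scale giving the requested Pfa for exponential (square-law) noise
    alpha = n_cells * (pfa ** (-1.0 / n_cells) - 1.0)
    detections = np.zeros(power.size, dtype=bool)
    margin = num_train + num_guard
    for i in range(margin, power.size - margin):
        leading = power[i - margin : i - num_guard]         # training cells before the guard
        lagging = power[i + num_guard + 1 : i + margin + 1]  # training cells after the guard
        noise_level = (leading.sum() + lagging.sum()) / n_cells
        detections[i] = power[i] > alpha * noise_level
    return detections


if __name__ == "__main__":
    rng = np.random.default_rng(1)
    profile = rng.exponential(1.0, 256)  # noise-only power profile
    profile[100] += 200.0                # one strong target
    print(np.flatnonzero(ca_cfar(profile)))
```

In a 4D pipeline the same idea is usually applied on the range-Doppler map, often in 2D or as two 1D passes.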

Modern 4D imaging radars need computing power on the order of a teraflops (TFLOPS), i.e. the ability to perform one trillion floating-point operations per second, which is far more than a traditional DSP can offer. Recently announced high-performance edge computing devices from Nvidia have made it possible to implement some of these applications in software on such platforms.
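To see where such a budget goes, the sketch below estimates the cost of just the range and Doppler FFT stages using the conventional 5N·log₂N operation count for an N-point complex FFT. The configuration (256 channels, 512 samples per chirp, 256 chirps per frame, 20 frames/s) is hypothetical, and a Doppler FFT is assumed for every range bin of every channel.

```python
import math


def fft_flops(n: int) -> float:
    """Conventional operation count for an n-point radix-2 complex FFT: 5 n log2 n."""
    return 5.0 * n * math.log2(n)


def fft_stage_gflops(channels: int, samples_per_chirp: int,
                     chirps_per_frame: int, frames_per_s: float) -> float:
    """GFLOPS for range FFTs (one per chirp per channel) and Doppler FFTs
    (one per range bin per channel); angle processing is not included."""
    range_ffts = channels * chirps_per_frame * fft_flops(samples_per_chirp)
    doppler_ffts = channels * samples_per_chirp * fft_flops(chirps_per_frame)
    return (range_ffts + doppler_ffts) * frames_per_s / 1e9


if __name__ == "__main__":
    print(f"{fft_stage_gflops(256, 512, 256, 20):.1f} GFLOPS")  # 57.0
```

Under these assumptions the FFT stages need only tens of GFLOPS, which suggests that most of a teraflops-class budget goes to later stages such as high-resolution angle estimation (for example OMP or IAA), detection, tracking and classification.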

Solution

Steradian’s SRIR256V1 module (see Fig. 2) is a first-generation radar sensor with 256 virtual channels. It can operate as a USRR, SRR, MRR or LRR sensor by reconfiguring its waveform design, chirp configuration and antenna configuration. Point clouds from the sensor in different scenarios are shown in Figs. 3 and 4. Innovative solutions are used at each stage of the signal processing chain, from waveform design and frame configuration to data collection and high-resolution processing, to achieve a point cloud comparable to a lidar point cloud.

Fig. 2. Steradian’s SRIR256V1 module consisting of 256 virtual channels

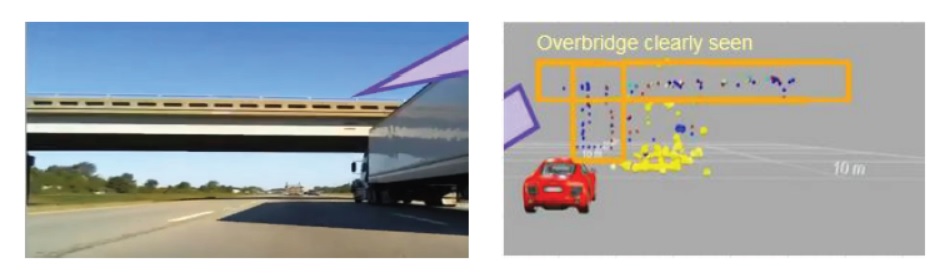

Fig. 3. Urban driving scenario. Traffic cones are clearly seen in the radar point cloud

Fig. 4. Freeway scenario. Overbridge is clearly seen in the radar point cloud.

Conclusions

Automotive radars have huge potential to become a primary sensor in future autonomous vehicles. They have many advantages over camera and lidar sensors. To achieve the high resolution needed to replace lidar sensors, automotive radars have to overcome a number of challenges. Much innovation is currently happening in this area, specifically to meet the requirements of high-resolution imaging radars within the limits of form factor and system cost. Some solutions that meet these requirements are already available on the market.

Authors

Shubham Paul, Software Engineer (Imaging Radar) at Steradian Semiconductors. He has 4+ years of experience in indoor autonomous robotics and the ADAS domain. In his previous role he worked on ADAS perception systems at Visteon, and before that on control software for Autonomous Ground Vehicles (AGVs) at Grey Orange.

Sumeer Bhatara, Director of Engineering at Steradian Semiconductors. He has over 18 years of experience in developing software for embedded systems. Prior to joining Steradian, he was a member of group technical staff and led the firmware engineering group for mmWave Radars at Texas Instruments. He has a broad technology background spanning DSL systems, 2G/3G modems, GPS, wireless LAN communications and, most recently, mmWave sensing. His expertise is in systems engineering, signal processing, software engineering, hardware-software and analog-digital co-design. His interest is in developing technologies and products in the embedded space to solve real-life problems.

(The views and opinions expressed in this article are those of the authors and do not necessarily reflect the position of Mobility Matrix).